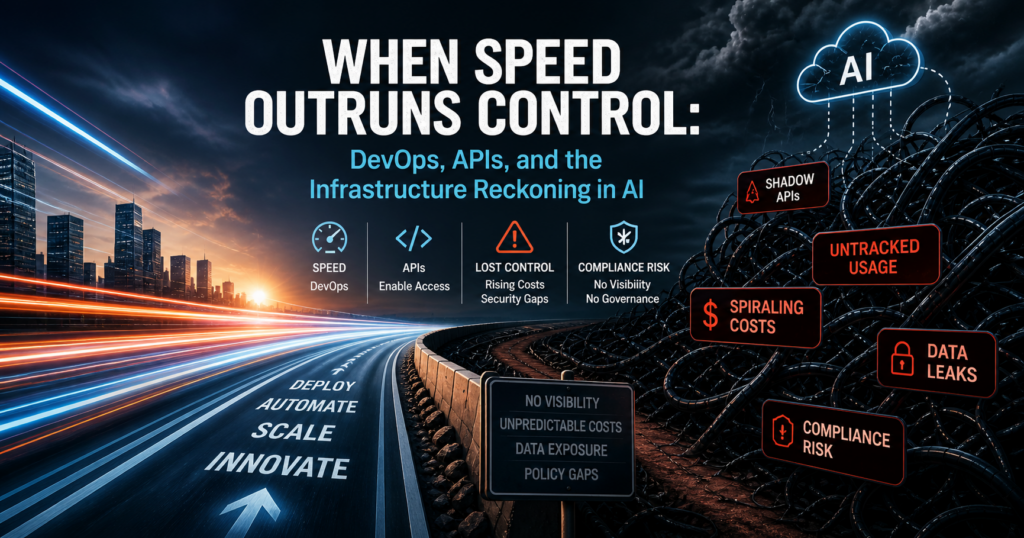

When Speed Outruns Control: DevOps, APIs, and the Infrastructure Reckoning in AI

The shift that happened quietly

Over the last few years, DevOps teams have become the largest consumers of infrastructure without most organisations fully noticing the impact. It started with cloud adoption, accelerated with APIs, and has now exploded with AI.

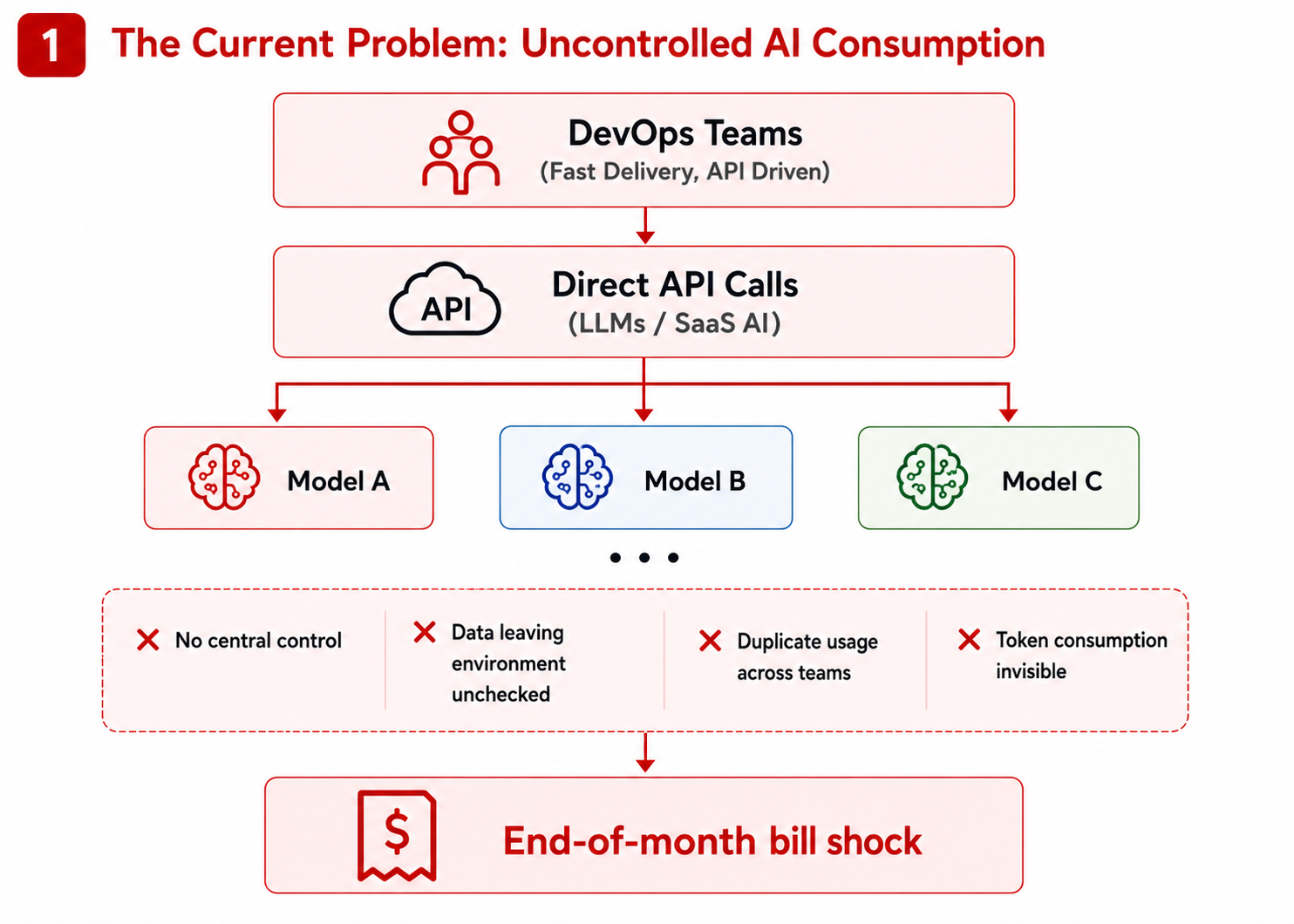

Getting access to a model is as simple as calling an endpoint. Scaling usage is just a matter of sending more requests. The barrier to entry is almost zero, which is exactly why it has spread so quickly across development teams.

But ease of use has masked something important. Consumption has grown faster than understanding.

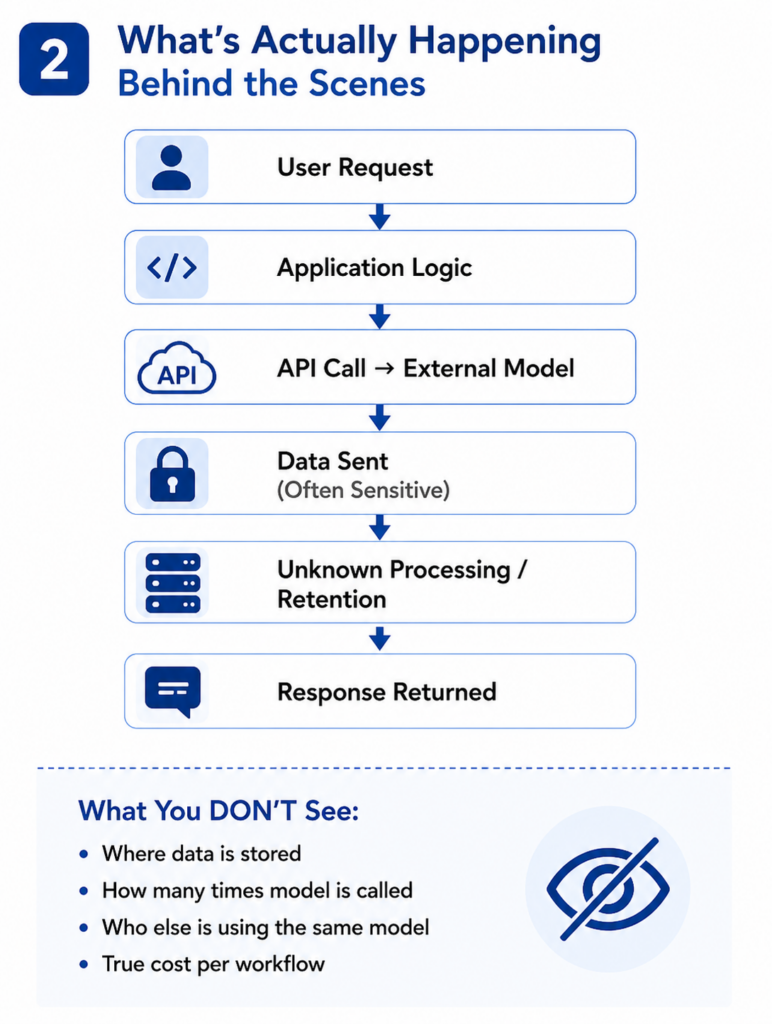

When developers integrate cloud-based AI models into pipelines, they are usually not thinking about networks, data boundaries, or long-term cost. They are solving the problem in front of them. That is their job.

The issue is what sits behind that API call.

Every request can include sensitive data. Every response depends on an external service. Every interaction has a cost attached to it. And that cost is rarely visible until it is too late.

Many organisations now find themselves in a familiar situation. At the end of the month, the bill arrives. Token usage has spiked. Multiple teams have been calling different models, often for testing, prototyping, or duplicated workloads. There is no clear ownership, no optimisation, and no easy way to trace what created the spend.

It is not unusual to see large bills driven by experimentation that was never governed in the first place.
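Making that spend traceable starts with attaching ownership metadata to every model call and pricing it from token counts. The sketch below is illustrative only: the model names, per-million-token prices, and the `team`/`purpose` tags are hypothetical, and real provider pricing and token accounting vary by vendor.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; real pricing varies by provider and model.
PRICES_PER_MILLION = {
    "model-a": {"input": 3.00, "output": 15.00},
    "model-b": {"input": 0.50, "output": 1.50},
}


@dataclass
class UsageRecord:
    team: str           # hypothetical ownership tag attached at request time
    purpose: str        # e.g. "prototype" or "production"
    model: str
    input_tokens: int
    output_tokens: int


def request_cost(rec: UsageRecord) -> float:
    """Price a single request from its input and output token counts."""
    price = PRICES_PER_MILLION[rec.model]
    return (rec.input_tokens * price["input"] + rec.output_tokens * price["output"]) / 1_000_000


def spend_by_owner(records: list[UsageRecord]) -> dict[tuple[str, str], float]:
    """Aggregate spend per (team, purpose) so the monthly bill can be traced back."""
    totals: dict[tuple[str, str], float] = defaultdict(float)
    for rec in records:
        totals[(rec.team, rec.purpose)] += request_cost(rec)
    return dict(totals)


records = [
    UsageRecord("payments", "prototype", "model-a", 200_000, 50_000),
    UsageRecord("payments", "prototype", "model-a", 100_000, 10_000),
    UsageRecord("search", "production", "model-b", 1_000_000, 200_000),
]

# Largest spenders first.
for owner, cost in sorted(spend_by_owner(records).items(), key=lambda kv: -kv[1]):
    print(owner, round(cost, 2))
```

With these made-up numbers, a handful of prototype calls on the more expensive model outspend a much larger production workload on the cheaper one, which is exactly the kind of pattern that stays invisible without tags.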

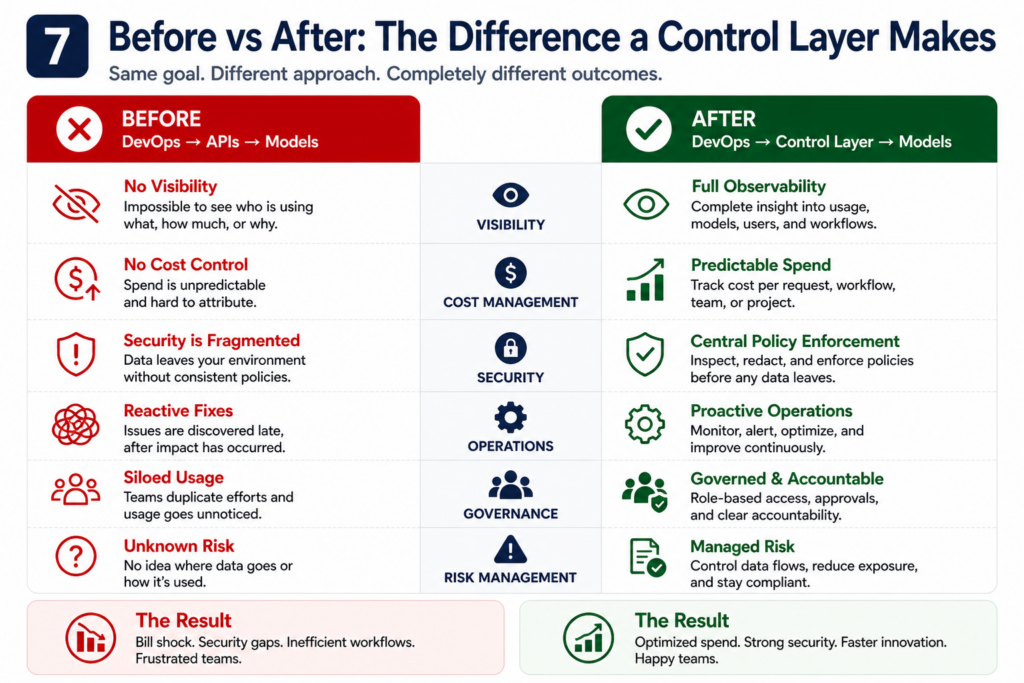

The bigger concern is not cost. It is control.

When AI models are consumed directly through APIs, security becomes fragmented. Data may leave the organisation without clear visibility. There is limited control over how that data is handled once it reaches the model. Logging exists, but it is not the same as enforcement.

There is often an assumption that if a provider offers an API, it must be secure enough. That is not how enterprise security works.

Security is about knowing where your data is, how it moves, and who can access it. When those decisions are pushed into application code across multiple teams, consistency disappears.

What you are left with is a growing attack surface that no single team fully owns.

Why infrastructure needs to take back control

This is not about slowing developers down. It is about putting the right controls in the right place.

Infrastructure teams understand how systems behave beyond the happy path. They think about failure, scale, data flow, and exposure. They design environments where risk is managed, not discovered after the fact.

AI consumption now sits directly in that domain.

Bringing control back under infrastructure means creating a consistent way to access models. It means defining how data can be used, where it can go, and how it is protected. It also means having a clear view of usage, performance, and cost across the entire estate.

Without that, organisations are effectively running AI in an uncontrolled environment.

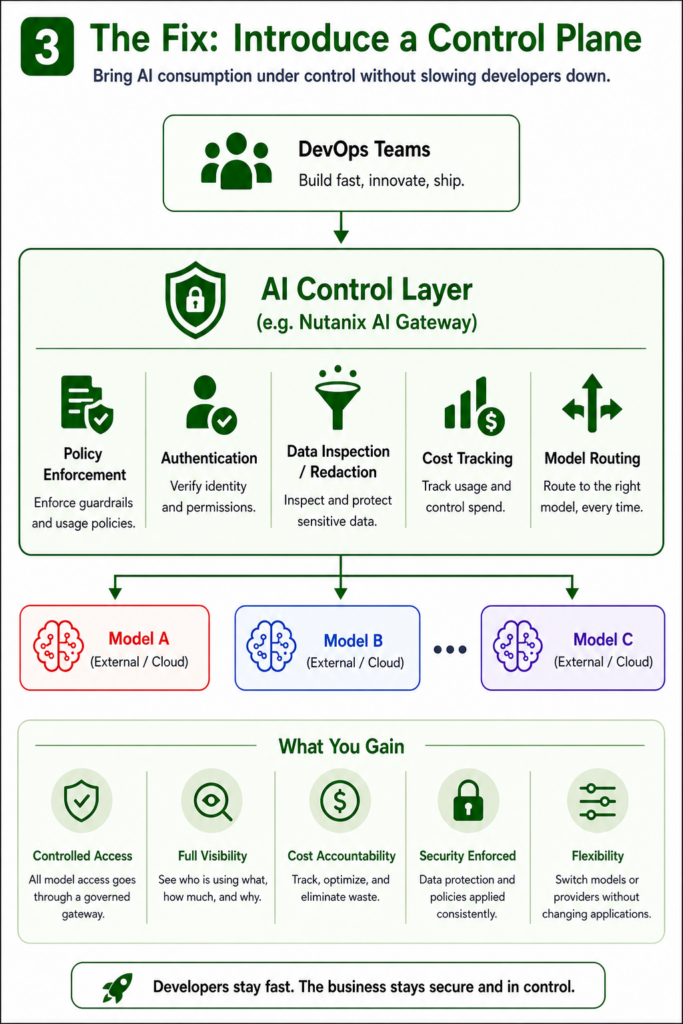

This is where platforms like Nutanix AI Gateway come into play.

Instead of every application calling external models directly, requests are routed through a central layer. That layer becomes the point where policy is enforced, access is controlled, and activity is observed.

Developers still build and ship at pace, but they do so through a governed pathway. Data can be inspected before it leaves the environment. Access can be restricted based on identity. Model usage can be tracked and optimised.
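To make the idea of a governed pathway concrete, here is a minimal sketch of such a central layer. It is a hypothetical in-process gateway, not Nutanix AI Gateway's actual API: it checks the caller's identity against an allowlist of models, redacts obvious sensitive patterns before anything leaves, and records every decision. The policy table, model names and the two regexes are illustrative assumptions; real data inspection is considerably more involved.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical policy: which identities (e.g. team service accounts) may use which models.
ACCESS_POLICY = {
    "team-payments": {"general-chat"},
    "team-search": {"general-chat", "code-assist"},
}

# Illustrative patterns only; real data inspection needs far more than two regexes.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{4}[- ]?\d{4}[- ]?\d{4}[- ]?\d{4}\b"),  # card-number-like strings
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),                 # email addresses
]


@dataclass
class Gateway:
    backend: Callable[[str, str], str]  # stand-in for the real model call
    audit_log: list[dict] = field(default_factory=list)

    def complete(self, identity: str, model: str, prompt: str) -> str:
        # 1. Identity-based access control: the request is refused, not just logged.
        if model not in ACCESS_POLICY.get(identity, set()):
            self.audit_log.append({"identity": identity, "model": model, "allowed": False})
            raise PermissionError(f"{identity} may not use {model}")
        # 2. Inspect and redact data before it leaves the environment.
        redacted = prompt
        for pattern in SENSITIVE_PATTERNS:
            redacted = pattern.sub("[REDACTED]", redacted)
        # 3. Forward the sanitised request and record the activity.
        response = self.backend(model, redacted)
        self.audit_log.append({
            "identity": identity,
            "model": model,
            "allowed": True,
            "redacted": redacted != prompt,
        })
        return response


# Fake backend that echoes its input, showing what would have left the environment.
gw = Gateway(backend=lambda model, prompt: f"{model} saw: {prompt}")
print(gw.complete("team-payments", "general-chat",
                  "Refund card 4111 1111 1111 1111 for bob@example.com"))
# prints "general-chat saw: Refund card [REDACTED] for [REDACTED]"
```

The point of the design is that none of these checks live in application code: every team gets the same enforcement and the same audit trail.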

Equally important, organisations gain the ability to change models without rewriting applications. That flexibility becomes critical as both cost and performance pressures increase.
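Swapping models without touching applications usually comes down to one layer of indirection: applications ask for a logical name, and the platform resolves it to a concrete provider and model. The sketch assumes hypothetical aliases, provider names and model IDs, and stubs out the actual model call.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTarget:
    provider: str
    model_id: str


class ModelRouter:
    """Maps logical model names used by applications to concrete backends."""

    def __init__(self, routes: dict[str, ModelTarget]):
        self._routes = dict(routes)

    def resolve(self, alias: str) -> ModelTarget:
        return self._routes[alias]

    def repoint(self, alias: str, target: ModelTarget) -> None:
        # Changing a route is a platform decision; application code is untouched.
        self._routes[alias] = target


router = ModelRouter({"summarise": ModelTarget("external-provider", "large-model-v1")})


def summarise_ticket(text: str) -> str:
    # Application code only knows the alias "summarise", never the concrete model.
    target = router.resolve("summarise")
    return f"[{target.provider}/{target.model_id}] summary of {len(text)} chars"


print(summarise_ticket("Customer cannot log in"))
# prints "[external-provider/large-model-v1] summary of 22 chars"
router.repoint("summarise", ModelTarget("on-prem", "small-model-v2"))
print(summarise_ticket("Customer cannot log in"))
# prints "[on-prem/small-model-v2] summary of 22 chars"
```

Moving a workload to a cheaper or locally hosted model then becomes a single routing change rather than a code change in every team's repository.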

Just as some control starts to return, the landscape shifts again.

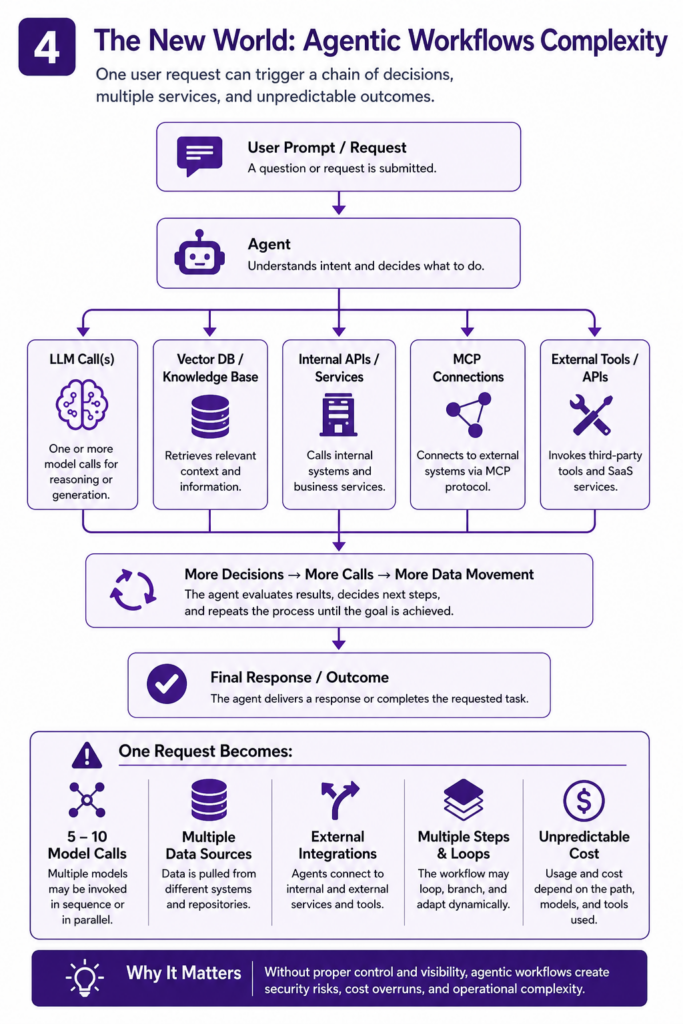

Agentic workflows introduce a new level of complexity. Instead of a single request to a single model, you now have chains of interactions. One action can trigger multiple model calls, reach into internal systems, query data stores, and connect to external tools through protocols such as the Model Context Protocol (MCP).

What looks like a simple request from a user can result in a cascade of activity behind the scenes.

This creates a very different problem. It is no longer about securing a single API call. It is about understanding and controlling an entire sequence of decisions and actions.

Without the right architecture, this becomes extremely difficult to manage. Data may move across boundaries multiple times. Costs can grow unpredictably. Failures can occur deep within a chain and be hard to trace back to the source.

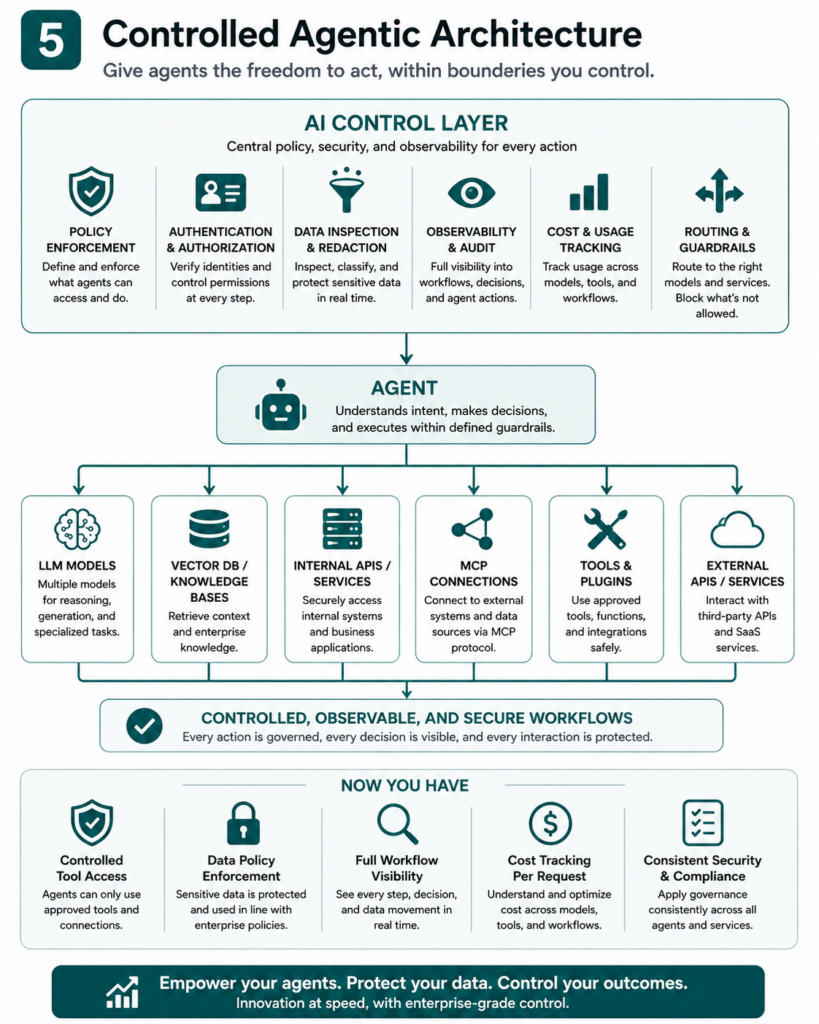

In this new model, control has to sit above the workflow, not inside it.

You need to know which tools an agent can access, what data it is allowed to use, and how far it can go in executing tasks. You also need visibility into how decisions are being made and what each step in the chain is doing.

If that sounds like an infrastructure problem, it is.

Because it requires consistent enforcement, clear boundaries, and real-time visibility across multiple systems. These are not application concerns. They are platform concerns.
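One way to put control above the workflow is for the platform, not the agent, to own the tool registry: every tool call passes through a guard that enforces a per-agent allowlist and a step budget, and records a trace of each step. This is a hypothetical sketch; the tool names and limits are invented, and it is not how any particular agent framework or MCP server implements the idea.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class PolicyViolation(Exception):
    """Raised when an agent step breaks platform policy."""


@dataclass
class AgentGuard:
    allowed_tools: set[str]               # per-agent allowlist (hypothetical)
    max_steps: int                        # hard cap on tool calls per task
    tools: dict[str, Callable[..., Any]]  # platform-owned tool registry
    trace: list[tuple[str, str]] = field(default_factory=list)
    steps_taken: int = 0

    def call(self, tool: str, **kwargs: Any) -> Any:
        # Enforce the budget first, so runaway chains stop early.
        if self.steps_taken >= self.max_steps:
            self.trace.append((tool, "blocked: step budget exhausted"))
            raise PolicyViolation("step budget exhausted")
        # Enforce the allowlist; blocked calls are traced but do not consume budget.
        if tool not in self.allowed_tools:
            self.trace.append((tool, "blocked: tool not allowed"))
            raise PolicyViolation(f"tool {tool!r} is not allowed for this agent")
        self.steps_taken += 1
        result = self.tools[tool](**kwargs)
        self.trace.append((tool, "ok"))
        return result


# Hypothetical tools: one read-only, one destructive.
tools = {
    "search_docs": lambda query: f"3 documents about {query}",
    "delete_records": lambda table: f"deleted {table}",
}
guard = AgentGuard(allowed_tools={"search_docs"}, max_steps=2, tools=tools)

print(guard.call("search_docs", query="refund policy"))
try:
    guard.call("delete_records", table="customers")
except PolicyViolation as err:
    print("stopped:", err)
print(guard.trace)
```

Because the trace is kept by the guard rather than by the agent, a failure deep in a chain can be traced back to the exact step, and the same limits apply regardless of which model is driving the agent.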

There is a strong focus on getting AI into production. Far less attention is given to what happens next.

Running AI is not the same as deploying it.

Models change over time. Behaviour shifts. Costs fluctuate. Agent workflows evolve as new tools are added. What worked in testing can behave very differently at scale.

Day-2 operations, the ongoing work of running AI systems after they go live, are where success or failure is decided.

This is where you need to monitor usage patterns, track cost per interaction, and understand how workflows behave in real conditions. It is where you detect drift, identify inefficiencies, and ensure that policies are still being followed.

Without this, organisations end up reacting to problems rather than managing them.
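As a rough illustration of spotting drift rather than reacting to it, the sketch compares each day's cost per interaction against the mean of the previous days and flags large deviations. The seven-day window, the 50% threshold and the sample figures are assumptions chosen for illustration; real monitoring would also track latency, error rates and output quality.

```python
from statistics import mean


def flag_cost_drift(daily_cost_per_interaction: list[float],
                    window: int = 7,
                    threshold: float = 0.5) -> list[int]:
    """Return indices of days whose cost per interaction differs from the mean
    of the previous `window` days by more than `threshold` (as a fraction)."""
    flagged = []
    for day in range(window, len(daily_cost_per_interaction)):
        baseline = mean(daily_cost_per_interaction[day - window:day])
        change = (daily_cost_per_interaction[day] - baseline) / baseline
        if abs(change) > threshold:
            flagged.append(day)
    return flagged


# Hypothetical data: USD per interaction; a new tool is added to the agent workflow on day 9.
costs = [0.020, 0.021, 0.019, 0.020, 0.022, 0.020, 0.021, 0.020, 0.021, 0.034, 0.036]
print(flag_cost_drift(costs))  # prints [9, 10]
```

Because the baseline rolls forward, a sustained shift eventually becomes the new normal and stops being flagged. Whether that is acceptable, or whether the baseline should be pinned until someone signs off on the change, is a policy decision rather than a technical one.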

AI is no longer just another service that developers can plug into. It is a core part of the infrastructure stack, whether organisations treat it that way or not.

DevOps has enabled incredible speed and innovation. That does not change. But the consumption of AI needs structure, visibility, and control.

Infrastructure teams are best placed to provide that foundation. Platforms like Nutanix AI Gateway offer a way to balance innovation with governance. Agentic workflows raise the stakes, making that balance even more important.

The organisations that get this right will not be the ones that move fastest in the short term. They will be the ones that build systems they can actually operate, secure, and afford over time.

Because sooner or later, the bill arrives. And so do the questions.